When I was about thirteen, the library was going to get ‘Calculus for the Practical Man.’ By this time I knew, from reading the encyclopedia, that calculus was an important and interesting subject, and I ought to learn it.

Richard P. Feynman, from What Do You Care What Other People Think?

Introduction

Calculus is an important branch of mathematics that deals with methods for calculating derivatives and integrals of functions and with using this information to study the properties of functions. It was independently invented by I. Newton and G. W. Leibniz in the 17th century, and it was further developed by other great mathematicians in the centuries that followed (see Figure below).

It comprises two areas:

- Differential calculus

It concerns the study of the rate of variation of functions.

- Integral calculus

It concerns the study of the area under the graphs of functions.

Depending on the nature of the functions involved in the calculations, we can further distinguish between single- and multi-variable calculus. In this chapter, the main concepts and methods of single-variable calculus are summarised.

Functions

Definition 1: A single variable function $latex f$ from a set $latex A$ to a set $latex B$, written $latex f: A \rightarrow B$, is a rule that assigns a unique (single) element $latex f(x) \in B$ to each element $latex x \in A$ (see Fig. 1). An infinite number of single variable functions can be generated from combinations of arithmetic, exponential, logarithmic and trigonometric expressions. In the following paragraphs, we are going to review functions that are relevant to the solution of differential equations.

Sinusoidal function. Functions containing trigonometric expressions (also called sinusoidal functions, oscillations, or signals) are particularly useful. They are usually defined in the time domain as $latex f(t) = A \cos(\omega t - \phi)$, where the parameters ($latex A, \omega, \phi$) in the equation have the following meanings:

- $latex A$ is the amplitude and it gives how high the graph of $latex f(t)$ rises above the t-axis at its maximum values.

- $latex \phi$ is the phase lag and it indicates the position of the maximum of the function.

- $latex \omega$ is the angular frequency and it gives the number of complete oscillations of $latex f(t)$ in a time interval of length $latex 2\pi$.

The following useful quantities are associated with the previous parameters:

- $latex \nu = \omega / 2\pi$ is the frequency of $latex f(t)$ and it represents the number of complete oscillations of $latex f(t)$ in a time interval of length 1.

- $latex \tau = \phi / \omega$ is the time delay or time shift and it indicates how far the graph of $latex f(t)$ has been shifted along the t-axis.

- $latex P = 2\pi / \omega$ is the period and it indicates the time required for one complete oscillation.
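The relations between these quantities can be checked numerically. The following is a minimal Python sketch, assuming the sinusoid has the form $latex f(t) = A \cos(\omega t - \phi)$; Python is used here only for illustration, and the function names and the parameter values are hypothetical choices, not taken from the figures.

```python
import math

def sinusoid(t, amplitude, omega, phase_lag):
    """Evaluate f(t) = A cos(omega t - phi) (assumed form of the sinusoid)."""
    return amplitude * math.cos(omega * t - phase_lag)

def derived_quantities(omega, phase_lag):
    """Return (frequency, time_delay, period) for the given parameters."""
    frequency = omega / (2.0 * math.pi)   # complete oscillations per unit time
    time_delay = phase_lag / omega        # shift of the graph along the t-axis
    period = 2.0 * math.pi / omega        # time for one complete oscillation
    return frequency, time_delay, period

# Illustrative values only
A, omega, phi = 2.0, 4.0, math.pi / 2
nu, tau, P = derived_quantities(omega, phi)

print("frequency :", nu)
print("time delay:", tau)
print("period    :", P)
print("f(tau)    :", sinusoid(tau, A, omega, phi))  # a maximum, equal to A
```

With these definitions the frequency is the reciprocal of the period, and the function reaches its maximum value A at t equal to the time delay and again one period later.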

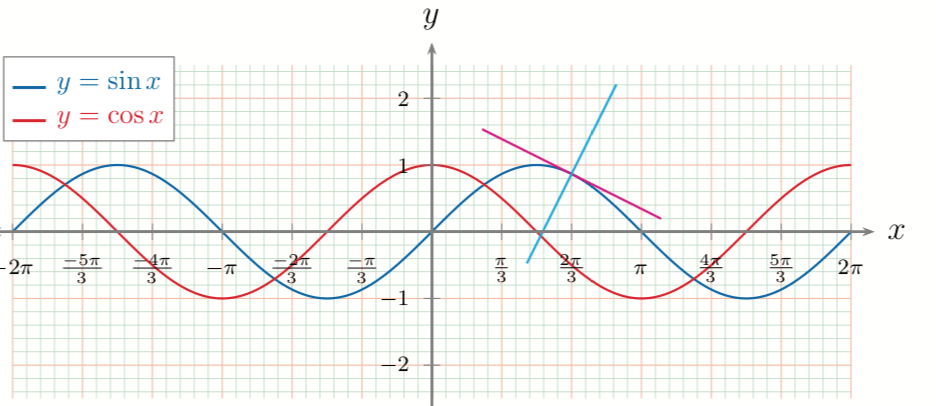

An example of a sinusoidal function is shown in Fig. 2.

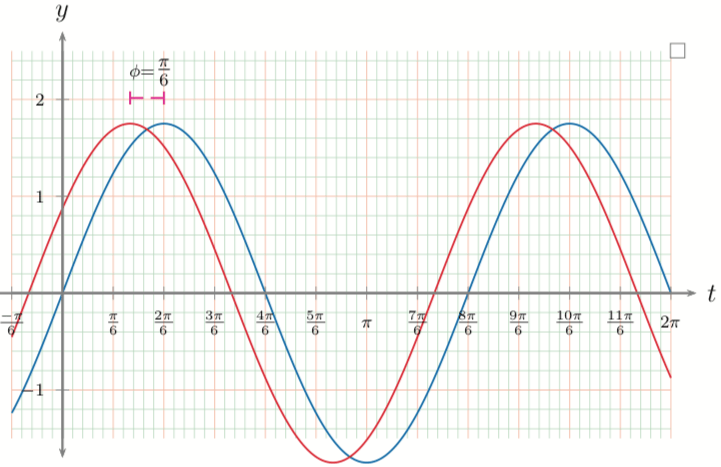

In Fig. 3, two periodic functions having the same set of parameters but differing by a phase shift are shown.

Exponential and natural logarithm functions

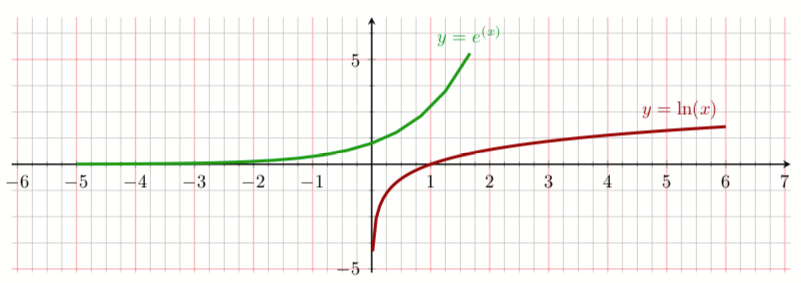

The exponential and natural logarithm functions are also very useful since they are solutions of many differential equations. In Fig. 4, the plots of the two functions are shown.

The transcendental number $latex e$ (transcendental numbers are real or complex numbers that are not algebraic, that is, they are not a root of a non-zero polynomial equation with integer (or, equivalently, rational) coefficients) is defined as:

$latex e = \lim_{n \to \infty} \left(1 + \frac{1}{n}\right)^{n}$

The exponential function $latex e^{x}$ and the natural logarithm $latex \ln x$ are inverse functions, therefore we can write

$latex \ln(e^{x}) = x$ and $latex e^{\ln x} = x$ (for $latex x > 0$).

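Some of the properties of the exponential function are summarised in the following list:

- $latex e^{0} = 1$.

- $latex e^{1} = e \approx 2.718$.

- $latex e^{x}$ is never 0.

- If $latex a > 0$ then $latex \lim_{x \to \infty} e^{ax} = \infty$ and $latex \lim_{x \to -\infty} e^{ax} = 0$.

- If $latex a < 0$ then $latex \lim_{x \to \infty} e^{ax} = 0$ and $latex \lim_{x \to -\infty} e^{ax} = \infty$.

- For any positive $latex a$, $latex e^{ax}$ grows much faster than any polynomial.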

Examples:

;

.

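For the exponential function, the usual rules of exponents apply

$latex e^{a+b} = e^{a} e^{b}, \quad e^{a-b} = \frac{e^{a}}{e^{b}}, \quad (e^{a})^{b} = e^{ab}$

with the corresponding properties for the logarithmic function (for $latex a, b > 0$)

$latex \ln(ab) = \ln a + \ln b, \quad \ln\left(\frac{a}{b}\right) = \ln a - \ln b, \quad \ln(a^{b}) = b \ln a$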

Numerical approximation of functions

Complicated functions can be approximated using a polynomial series called a Taylor series. In this way, the function can be calculated using basic mathematical operations. To learn more, have a look at my blog.
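To make the idea concrete, here is a minimal Python sketch that approximates the exponential function with a truncated Taylor series about 0, using only additions, multiplications and divisions. The function name `exp_taylor` and the choice of $latex e^{x}$ as the example are assumptions made for illustration; Python is used here only as a convenient notation.

```python
import math

def exp_taylor(x, n_terms=20):
    """Approximate exp(x) with the first n_terms of its Taylor series about 0."""
    total = 0.0
    term = 1.0                 # current term, starting from x**0 / 0!
    for k in range(n_terms):
        total += term
        term *= x / (k + 1)    # next term: x**(k+1) / (k+1)!
    return total

# The error with respect to the library value shrinks as terms are added
for n in (2, 5, 10, 20):
    approx = exp_taylor(1.0, n)
    print(n, approx, abs(approx - math.e))
```

Each new term is obtained from the previous one, so no factorial or power has to be computed explicitly.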

The derivative of a function

Definition of the limit of a function

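Suppose $latex f(x)$ is defined on an open interval about $latex x_0$, except possibly at $latex x_0$ itself. If $latex f(x)$ is arbitrarily close to $latex L$ (as close to $latex L$ as we like) for all $latex x$ sufficiently close to $latex x_0$, we say that $latex f$ approaches the limit $latex L$ as $latex x$ approaches $latex x_0$, and write:

$latex \lim_{x \to x_0} f(x) = L$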

The limit of a function provides us with the mathematical tool necessary for introducing the concept of the derivative of a function. The derivative of a function $latex f(x)$ is defined as follows:

$latex f'(x) = \lim_{h \to 0} \frac{f(x+h) - f(x)}{h}$

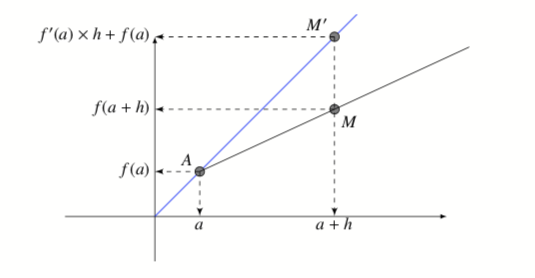

The graphical representation of the formula is shown in Fig. 5. The derivative of the function $latex f$ at the point $latex x$ is the slope of the line tangent to the curve at this point. This line approximates the function at a nearby point $latex x+h$ starting from its value at the point $latex x$.
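As a short worked illustration (the function $latex f(x) = x^{2}$ is chosen here only as an example and does not come from the figure), the limit of the incremental ratio can be evaluated step by step:

$latex \frac{f(x+h) - f(x)}{h} = \frac{(x+h)^{2} - x^{2}}{h} = \frac{2xh + h^{2}}{h} = 2x + h$

$latex f'(x) = \lim_{h \to 0} (2x + h) = 2x$

So the tangent line to the parabola at, say, $latex x = 1$ has slope 2.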

Differentiation rules

Using the definition of the derivative as the limit of the incremental ratio, it is also possible to derive the properties of the differentiation operation, given below in terms of rules.

The sum or difference rule

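The derivative of the sum of $latex f(x)$ and $latex g(x)$ is

$latex (f + g)' = f' + g'$

Similarly, the derivative of the difference is

$latex (f - g)' = f' - g'$

The product rule

The derivative of the product of $latex f(x)$ and $latex g(x)$ is

$latex (f g)' = f' g + f g'$

The quotient rule

The derivative of the quotient of $latex f(x)$ and $latex g(x)$ (with $latex g(x) \neq 0$) is

$latex \left(\frac{f}{g}\right)' = \frac{f' g - f g'}{g^{2}}$

The chain rule

The derivative of the composition of $latex f(x)$ and $latex g(x)$ is

$latex \left(f(g(x))\right)' = f'(g(x)) \, g'(x)$

The power rule

The derivative of a power of $latex x$ is given by

$latex \frac{d}{dx} x^{n} = n x^{n-1}$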
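The rules can be combined. As a worked example (the function below is an arbitrary choice made for illustration), take $latex y(x) = x^{2} \sin(3x)$. The product rule gives

$latex y'(x) = (x^{2})' \sin(3x) + x^{2} (\sin(3x))'$

The power rule gives $latex (x^{2})' = 2x$, and the chain rule with the inner function $latex 3x$, together with the known derivative of the sine, gives $latex (\sin(3x))' = 3\cos(3x)$, so that

$latex y'(x) = 2x \sin(3x) + 3x^{2} \cos(3x)$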

Examples of derivatives of elementary functions

Numerical approximation of functions

Trigonometric, transcendental and radical functions cannot be evaluated directly using basic arithmetic operations; for their numerical calculation it is therefore necessary to approximate them with a polynomial series. This can be easily accomplished using Taylor series, which are described in another blog post.

The numerical differentiation

Calculating derivatives is a common task in the evaluation of physical models, for example when molecular forces are required (see my blog on the molecular dynamics methods). Although in this case it is preferable to calculate the derivatives analytically from the corresponding potential energy functions, numerical differentiation is an easier and faster algorithm to implement. The drawbacks are the limited accuracy and the pitfalls of numerical approximation: by its definition, the method requires subtracting two numbers that may differ by only a small amount.
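The pitfall of subtracting two nearly equal numbers can be seen in a short numerical experiment. The following is a minimal Python sketch, assuming double-precision arithmetic; Python is used here only for illustration, and the helper name `incremental_ratio`, the test function sin(x) and the point x = 1 are choices made for this example.

```python
import math

def incremental_ratio(f, x, h):
    """Incremental ratio (f(x+h) - f(x)) / h used to estimate f'(x)."""
    return (f(x + h) - f(x)) / h

x = 1.0
exact = math.cos(x)   # analytical derivative of sin(x)

# Shrinking h helps at first, then round-off in the subtraction takes over
for h in (1e-1, 1e-4, 1e-8, 1e-12, 1e-16):
    error = abs(incremental_ratio(math.sin, x, h) - exact)
    print(f"h = {h:.0e}   error = {error:.3e}")
```

For the smallest step the sum x + h is rounded back to x, the numerator becomes exactly zero, and the estimate of the derivative is lost completely. A smaller step is therefore not always a better one.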

The derivative at the point x of a function $latex f(x)$ is defined as:

$latex f'(x) = \lim_{h \to 0} \frac{f(x+h) - f(x)}{h}$ (19)

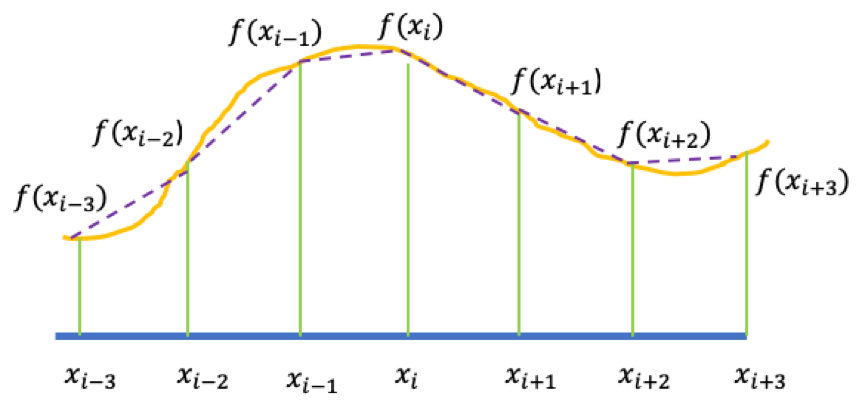

To calculate the numerical derivative as in equation (19), we first need to discretize the derivative as the incremental ratio of a single variable function on a grid of points equally spaced at the interval $latex h$:

$latex f'(x) \approx \frac{f(x+h) - f(x)}{h}$ (20)

The function is known on an equally spaced lattice of $latex x$ values (see Figure 6)

$latex f_n = f(x_n)$

with $latex x_n=n h$ and $latex n = 0, \pm 1, \pm 2, \ldots$, and $latex h$ is the step size.

Depending on the order and on the grid points used for the approximate formula, we can use three simple formulas to perform numerical differentiation:

- Forward finite divided difference

- Backward finite divided difference

- Centered finite divided difference

Equation (20) corresponds to the forward formula. The error in the estimate of the exact (analytical) derivative depends on the step size (h). In particular, in the forward (as in the backward) difference method the error is of first order in h, that is, it is proportional to h. The backward formula is given by

$latex f'(x) \approx \frac{f(x) - f(x-h)}{h}$ (21)
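Where the order of the error comes from can be seen with a Taylor expansion of $latex f(x+h)$ around $latex x$. The following is a brief sketch that assumes $latex f$ is smooth enough for the expansion to hold:

$latex f(x+h) = f(x) + h f'(x) + \frac{h^{2}}{2} f''(x) + O(h^{3})$

$latex \frac{f(x+h) - f(x)}{h} = f'(x) + \frac{h}{2} f''(x) + O(h^{2})$

The leading error term of the forward formula is therefore $latex \frac{h}{2} f''(x)$, which is linear in $latex h$. Replacing $latex h$ with $latex -h$ gives the same leading term, with the opposite sign, for the backward formula.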

Centered Difference Approximation of the First Derivative

A more accurate formula for the derivative, with an error proportional to the square of h, is given by the so-called centered or symmetric difference approximation:

$latex f'(x) \approx \frac{f(x+h) - f(x-h)}{2h}$ (22)
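How the error of the three approximations scales with the step size can be checked by halving h and comparing the errors. The following is a minimal Python sketch written for illustration; the function names, the test function sin(x) and the point x = 1 are assumptions made for this example. It assumes the usual forward, backward and centered incremental ratios referred to as (20), (21) and (22).

```python
import math

def forward(f, x, h):
    """Forward difference: (f(x+h) - f(x)) / h."""
    return (f(x + h) - f(x)) / h

def backward(f, x, h):
    """Backward difference: (f(x) - f(x-h)) / h."""
    return (f(x) - f(x - h)) / h

def centered(f, x, h):
    """Centered (symmetric) difference: (f(x+h) - f(x-h)) / (2h)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

x = 1.0
exact = math.cos(x)   # analytical derivative of sin(x)

def error(rule, h):
    """Absolute error of a difference rule applied to sin at x."""
    return abs(rule(math.sin, x, h) - exact)

def error_ratio(rule, h):
    """Error at step h divided by the error at step h/2."""
    return error(rule, h) / error(rule, h / 2.0)

for rule in (forward, backward, centered):
    print(rule.__name__, error(rule, 0.05), error_ratio(rule, 0.05))
```

A ratio close to 2 indicates an error proportional to h, while a ratio close to 4 indicates an error proportional to the square of h.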

Programming the finite difference approximations of the First Derivative of a function

The formulas (20), (21) and (22) are easily implemented as functions in a programming language. For example, an implementation in the awk language is the following.

[code language="cpp"]

#* diff.awk
#* Copyright (C) 2018 daniloroccatano

# Return the value of the function at x
function f(x)
{
    return sin(x)
}

# Return the value of the analytical derivative of f at x
function derf(x)
{
    return cos(x)
}

# Return the numerical derivative of f at x calculated using
# the forward formula
function fp_forw(h, x)
{
    return (f(x+h) - f(x))/h
}

# Return the numerical derivative of f at x calculated using
# the backward formula
function fp_back(h, x)
{
    return (f(x) - f(x-h))/h
}

# Return the numerical derivative of f at x calculated using
# the symmetric formula
function fp_sym(h, x)
{
    return (f(x+h) - f(x-h))/(2*h)
}

# Return the absolute value of v
function abs(v)
{
    return (v < 0) ? -v : v
}

BEGIN {
    x = 1.0
    h = 0.05

    # Print the table with the absolute difference between the numerical
    # and the analytical value of the derivative
    print "---------------------------------------------------------"
    printf "%10s %10s %10s %10s\n", "h", "Symmetric", "Forward", "Backward"
    printf "%10s %10s %10s %10s\n", "", "3-points", "2-point", "2-point"
    print "---------------------------------------------------------"

    # Calculate the differences
    diff1 = abs(fp_sym(h, x) - derf(x))
    diff2 = abs(fp_forw(h, x) - derf(x))
    diff3 = abs(fp_back(h, x) - derf(x))

    printf "%10.5f %10.5f %10.5f %10.5f\n", h, diff1, diff2, diff3
}

[/code]

Higher derivatives

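A better estimate of the first derivative can be obtained by considering more points. In this way, the derivative can be calculated from the polynomial approximation of the function through the different points. 4- and 5-point approximations can be derived by considering the following Taylor expansions:

$latex f(x \pm h) = f(x) \pm h f'(x) + \frac{h^{2}}{2} f''(x) \pm \frac{h^{3}}{6} f'''(x) + O(h^{4})$

$latex f(x \pm 2h) = f(x) \pm 2h f'(x) + 2h^{2} f''(x) \pm \frac{4h^{3}}{3} f'''(x) + O(h^{4})$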

Higher derivative formulas can be constructed by taking the appropriate combination of the Taylor expansions for the $latex \pm h$ and $latex \pm 2h$ intervals. In the following Table, 4- and 5-point formulas for derivatives up to the 4th order are shown.